Hello, I want to use vision for external position estimation. I have set the parameter EKF2_AID_MASK in QGroundControl, with bit 3 set to enable vision position fusion and bit 4 set to enable vision yaw fusion.

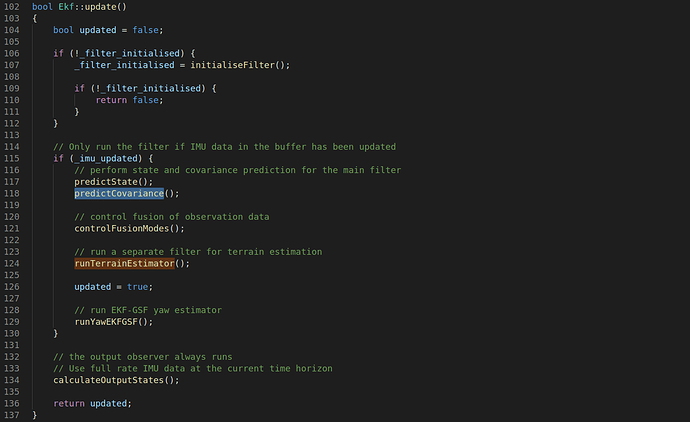
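
To double-check the value shown above, here is a minimal sketch of how I compute the mask from the bit positions. The helper names `aid_mask` and `bit_is_set` are my own and are not part of PX4 or QGroundControl; the only thing taken from the parameter description is that bit 3 is vision position fusion and bit 4 is vision yaw fusion.

```python
# Bit positions taken from the EKF2_AID_MASK parameter description.
VISION_POSITION_BIT = 3
VISION_YAW_BIT = 4


def aid_mask(*bits):
    """Combine bit positions into an integer bitmask (hypothetical helper)."""
    mask = 0
    for bit in bits:
        mask |= 1 << bit
    return mask


def bit_is_set(mask, bit):
    """Return True if the given bit position is set in the mask."""
    return bool(mask & (1 << bit))


mask = aid_mask(VISION_POSITION_BIT, VISION_YAW_BIT)
print(mask)                               # 8 + 16 = 24
print(bit_is_set(mask, VISION_YAW_BIT))   # True
```

With only these two bits set, the parameter value should read 24.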

I get the pose estimate from my vision algorithm and publish the position and orientation data on the topic /mavros/vision_pose/pose. I have flown in an environment with strong magnetic shielding, and the yaw shown in QGroundControl is not right. So I kept the drone static, but the yaw pointer in QGroundControl still rotates.

I subscribed to the topics /mavros/imu/data, /mavros/local_position/pose, and /aloam_high_frec (the pose computed by my vision algorithm). The yaw in /mavros/local_position/pose is almost the same as the yaw in /mavros/imu/data, which suggests that it is not following the vision yaw. What could be the cause of this problem?

I read Firmware/src/module/ekf_main.cpp and Firmware-master/src/lib/ecl/EKF/ekf.cpp, but I can't find the implementations of the predictCovariance function, the runYawEKFGSF function, and the controlFusionModes function.
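
For anyone who wants to reproduce the yaw comparison, this is a minimal sketch of how yaw can be extracted from the orientation quaternion of each message and compared. It assumes the quaternion is given as (x, y, z, w), as in a ROS geometry_msgs/Quaternion, and that yaw is the rotation about the z axis; the function names are my own and no ROS calls are used here.

```python
import math


def yaw_from_quaternion(x, y, z, w):
    """Yaw (rotation about z, in radians) of a unit quaternion (x, y, z, w)."""
    siny_cosp = 2.0 * (w * z + x * y)
    cosy_cosp = 1.0 - 2.0 * (y * y + z * z)
    return math.atan2(siny_cosp, cosy_cosp)


def yaw_difference(a, b):
    """Smallest signed difference a - b in radians, wrapped to [-pi, pi]."""
    d = a - b
    return math.atan2(math.sin(d), math.cos(d))


# Example: a quaternion representing a 90 degree rotation about z.
q = (0.0, 0.0, math.sin(math.pi / 4), math.cos(math.pi / 4))
print(math.degrees(yaw_from_quaternion(*q)))
```

Applying `yaw_from_quaternion` to the orientation of each topic and then `yaw_difference` between pairs makes it easier to see which source the local position yaw is following. Note that the topics may not share the same reference frame, so a constant offset between them is not necessarily an error.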

I also want to understand the principles behind the EKF2 implementation. I have read the papers "A Linear Kalman Filter for MARG Orientation Estimation Using the Algebraic Quaternion Algorithm" and "Estimation of IMU and MARG orientation using a gradient descent algorithm", and I understand pose estimation with a MARG sensor. However, I want to know more about how vision is fused with the MARG sensor. Where can I find references for the pose estimation implemented in EKF2?

My email address is 1930739@tongji.edu.cn. I would be very grateful for a reply. Thank you very much!