CNN or convolutional neural network is the cornerstone of all computer vision tasks. Even in the case of object detection, convolution operations are used to extract the pattern of an object from an image to a feature graph (basically a matrix smaller than the image size). Now from the last few years, there has been a lot of research on object detection tasks, and we have a lot of the most advanced algorithms or methods.

Key technologies:

Object detection is now a massive, semester-long topic. It consists of many algorithms. Therefore, to keep it short, target detection algorithms are divided into various categories, such as region-based algorithms (RCNN, fast-RCNN, ftP-RCNN), two-level detectors, and first-level detectors, where region-based algorithms themselves are part of the two-level detectors, but we will briefly explain them below. So we mention them explicitly. Let’s start with RCNN (region-based convolutional Neural Network).

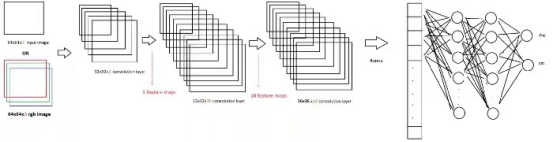

The basic architecture of target detection algorithm consists of two parts. This part consists of a CNN, which converts the original image information into feature graph. In the next part, different algorithms have different technologies. Therefore, in the case of RCNN, it uses selective search to obtain ROI (area of interest), that is, where there are likely to be different objects. Approximately 2000 regions are extracted from each image. It uses these ROIs to classify labels and predict object locations using two different models. So these models are called two-stage detectors.

RCNN has some limitations, in order to overcome these limitations, they proposed Fast RCNN. RCNN has high computation time because each region is passed to CNN separately, and it uses three different models for prediction. Therefore, in Fast RCNN, each image is transmitted to CNN only once and the feature graph is extracted. Selective searches are used on these maps to generate predictions. Combine all three models used in RCNN.

However, Fast RCNN still uses slow selective search, so the calculation time is still very long. Guess they came up with another version that makes sense, the faster RCNN. Faster RCNN replaces the selective search method with a regional proposal network, making the algorithm Faster. Now let’s turn to some disposable detectors. YOLO and SSD are very well known object detection models because they offer a very good trade-off between speed and accuracy

YOLO: A single neural network predicts bounding box and category probability directly from the complete image in a single evaluation. Because the entire detection pipeline is a single network, end-to-end optimization can be performed directly on detection performance

Single Shot Detector (SSD) : The SSD method discretes the output space of bounding boxes into a set of default boxes with different aspect ratios. After discretization, the method scales according to the position of feature graph. The Single Shot Detector network combines predictions from multiple feature graphs with different resolutions to naturally process objects of various sizes.